OpenAI has introduced GPT-5.5, the latest version of its large language model powering ChatGPT and related developer tools. Rather than introducing dramatic new features, GPT-5.5 focuses largely on refinement: improving reasoning, reducing incorrect answers and making interactions feel more natural and consistent.

According to OpenAI’s announcement, the model improves coding performance, handles longer and more complex prompts more reliably, and delivers better multimodal understanding across text and images. OpenAI also says hallucinations, where AI confidently generates incorrect information, have been reduced compared with earlier models.

One of the more significant changes is how OpenAI is handling reasoning tasks. GPT-5.5 appears designed to dynamically balance response speed with deeper analysis, rather than relying on separate “fast” and “reasoning” models. In practice, this should mean fewer situations where users need to manually select different AI modes depending on the task.

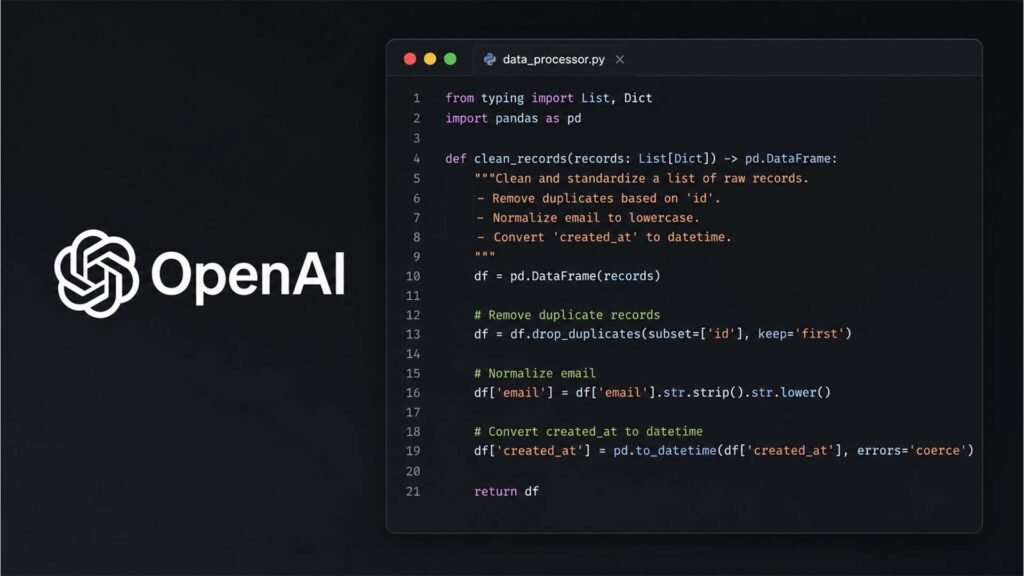

Coding remains a major focus. OpenAI claims GPT-5.5 performs better at debugging, repository-scale code understanding and following complex software instructions. That reflects the growing use of generative AI as a development tool rather than simply a conversational assistant.

For everyday users, the changes may be more subtle. GPT-5.5 is intended to produce shorter, clearer responses with less repetitive phrasing and fewer overly verbose explanations. OpenAI is also continuing to expand memory and context features, allowing the system to reference previous chats, uploaded files and connected services more effectively.

The release comes amid increasing competition in the AI space, with companies including Anthropic, Google and xAI all rapidly improving their own reasoning-focused models. As a result, the focus across the industry is shifting away from novelty and toward reliability, accuracy and practical usefulness.

For technically minded consumers, GPT-5.5 looks less like a major reinvention and more like a maturity update — a model aimed at making AI tools more dependable in day-to-day use rather than simply more impressive in demonstrations.